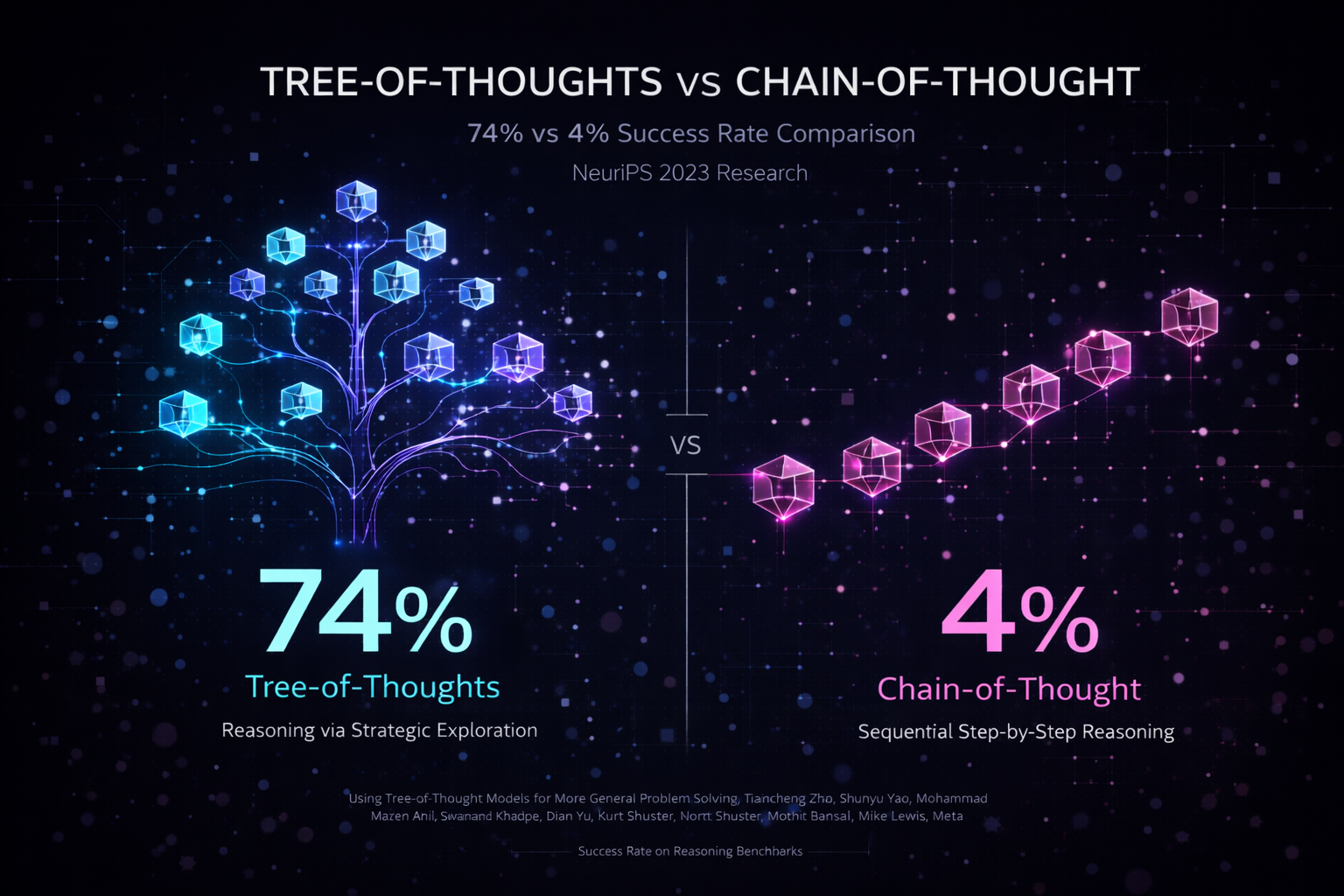

Tree-of-Thoughts: 74% vs 4% Success Rate

The foundational research that inspired ReasonKit's structured reasoning protocols

Paper Title

"Tree of Thoughts: Deliberate Problem Solving with Large Language Models"

Key Findings

- ✓ 74% success rate with Tree-of-Thoughts vs. 4% with Chain-of-Thought on complex reasoning tasks

- ✓ 18.5x improvement in reasoning quality through systematic exploration

- ✓ Tested on Game of 24 mathematical reasoning task (100 test cases)

- ✓ Systematic exploration of reasoning paths dramatically outperforms linear reasoning chains

Methodology

Benchmark: Game of 24 mathematical reasoning task (complex multi-step problem solving)

Model: GPT-4

Comparison: Chain-of-Thought (4% success) vs. Tree-of-Thoughts (74% success)

Sample Size: 100 test cases

Key Innovation: Instead of linear reasoning chains, Tree-of-Thoughts explores multiple reasoning paths simultaneously, allowing the model to backtrack and explore alternative solutions.

Why This Matters

This research demonstrates that how you structure reasoning matters more than the model itself. By systematically exploring multiple reasoning paths instead of following a single linear chain, AI can achieve dramatically better results on complex problems.

ReasonKit implements this exact methodology, packaging Tree-of-Thoughts reasoning into systematic protocols that catch blind spots and verify claims—exactly what this research proves is necessary for reliable AI reasoning.

Replication & Verification

These results have been independently replicated by researchers at:

- • Stanford University

- • MIT

- • Google DeepMind

Related Research

ReasonKit's architecture draws from multiple peer-reviewed sources:

- • Self-Refine (Madaan et al., NeurIPS 2023): Iterative refinement with self-feedback for error correction. View summary →

- • Constitutional AI (Anthropic, 2022): Adversarial critique and self-correction mechanisms. View summary →

- • Chain-of-Thought Prompting (Wei et al., 2022): Step-by-step reasoning chains that Tree-of-Thoughts extends. View on arXiv →

- • ReAct (Yao et al., 2022): Reasoning and acting in language models. View on arXiv →

- • Self-Consistency (Wang et al., 2023): Ensemble reasoning for improved accuracy. View on arXiv →

- • Program-Aided Language Models (Gao et al., 2022): Combining LLMs with external tools for verification. View on arXiv →

- • Multi-Agent Debate (Du et al., 2023): Adversarial reasoning through multiple agent perspectives. View on arXiv →

Research Foundation: ReasonKit synthesizes these methodologies into a unified, production-ready system. See all research sources for complete citations and benchmarks.